10 Milliseconds Too Many

How investigating a tiny delay revealed a risk we could fix before users ever noticed.

March 2023. We were preparing the company’s IT systems for a migration to the cloud.

The new infrastructure required us to redirect all traffic through a firewall, adding about 10 milliseconds of latency to every call.

Without deeper analysis, this could have caused severe damage to our business.

A System That Felt Fast Enough

A couple of years ago, our e-commerce company decided to move all its IT systems from on-premises infrastructure to the cloud. This included build-chain tools and standalone applications running on VMs, as well as databases and microservices. A dedicated task force was created, and I was lucky enough to be part of the team working on this challenging program.

While designing our future cloud architecture, we learned that all traffic had to be routed through a dedicated firewall, because we relied on some services provided by our parent company. In practice, this meant that any service calling another service would now go through an additional network hop.

The 10 Milliseconds That Didn’t Fit

All systems were located within the same region in Switzerland, and the firewall itself was relatively close — only a few hundred kilometers away. Including computation and networking latency, the estimated overhead was about 10 milliseconds per call, which is usually considered negligible.

However, we soon realized this applied to every single call in our microservices architecture, including database calls. We started to feel skeptical. For some reason, I was particularly concerned about unexpected impacts on our logistics production, although it was difficult to clearly explain or justify that intuition at first.

Following the Delay

To validate my concern, I proposed running a short analysis. Some of our systems are performance-critical. The most notable example is the picking system used in warehouses to prepare orders. Employees move quickly between shelves, and it is essential that their scanner devices respond instantly and reliably.

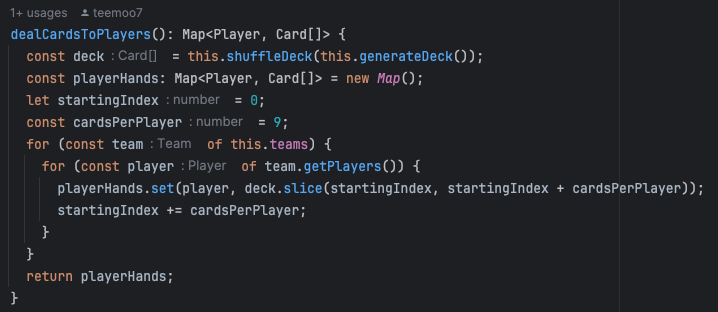

I spent some time investigating the calls made by the picking system. The workflow is more complex than it appears: it handles orders (which to pick first), item information (descriptions and locations), stock management, warehouse organization (areas and timing), and, of course, security layers on top.

Understanding the Real Risk

I selected a few random requests in our APM (Application Performance Management) tool and analyzed the traces rather than logs or metrics.

In observability terms:

- metrics are aggregated numerical measurements over time (e.g., CPU usage), ideal for alerting;

- logs are detailed, timestamped records of discrete events within a component;

- traces map the end-to-end journey of a request across services — exactly what I needed.
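
To make the last point concrete, a trace can be pictured as a tree of spans, where each span is one timed operation and its children are the calls it triggered. Below is a minimal sketch of counting the downstream calls behind a single request. The `Span` class, the service names, and the timings are all hypothetical and invented for illustration; this is not the data model of any particular APM tool.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    """One timed operation inside a trace (hypothetical, simplified model)."""
    name: str
    duration_ms: float
    children: list["Span"] = field(default_factory=list)


def count_subcalls(span: Span) -> int:
    """Count every downstream call below the given span, at any depth."""
    return sum(1 + count_subcalls(child) for child in span.children)


# Illustrative trace for one scanner request; names and timings are made up.
trace = Span("POST /pick", 280.0, [
    Span("auth-service", 20.0),
    Span("order-service", 90.0, [
        Span("orders-db", 15.0),
        Span("stock-service", 40.0, [Span("stock-db", 12.0)]),
    ]),
    Span("item-service", 60.0, [Span("items-db", 18.0)]),
])

print(count_subcalls(trace))  # 7
```

Neither a metric nor a log line exposes this nesting directly, which is why the count of underlying calls is easiest to read from a trace.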

My intuition proved correct. Depending on the use case, I observed between 18 and 27 underlying calls. Running on-premises, a request typically took about 250–300 ms end-to-end. Adding 10 ms to each subcall would increase total latency by roughly 180–270 ms if the calls run one after another, almost doubling response time.
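
The estimate above is a simple multiplication, sketched below using the figures from this article (18 to 27 subcalls, 10 ms per call, a 250–300 ms baseline). The function name `added_latency_ms` is hypothetical, and the sketch assumes every subcall runs sequentially and crosses the firewall exactly once; calls executed in parallel would add less.

```python
def added_latency_ms(subcalls: int, overhead_ms: float = 10.0) -> float:
    """Extra end-to-end latency if each subcall pays the overhead once, in sequence."""
    return subcalls * overhead_ms


# Combine the observed call counts with the observed on-premises baselines.
for subcalls in (18, 27):
    extra = added_latency_ms(subcalls)
    for baseline_ms in (250.0, 300.0):
        total = baseline_ms + extra
        print(
            f"{subcalls} calls, baseline {baseline_ms:.0f} ms: "
            f"+{extra:.0f} ms -> {total:.0f} ms ({total / baseline_ms:.2f}x)"
        )
```

Under these assumptions the added latency ranges from 180 to 270 ms, which puts the total between roughly 1.6 and 2.1 times the on-premises response time.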

It would be tempting to question why a single use case required so many subcalls. That would be fair — in theory.

We often underestimate how many database calls a system performs. The count grows because we try to avoid tight data coupling or overly large DAOs (data access objects).

In distributed microservices architectures, multiplying calls is part of the trade-off compared to monolithic systems.

But that was not the goal of this exercise. We were not redesigning the architecture; we were migrating existing systems safely and transparently, without forcing the business to pause operations.

It would also be incorrect to assume users would not notice an additional 200 ms of latency. Although perception varies, it is generally accepted that changes below 100 ms are rarely noticeable in typical applications. Systems responding within 200–300 ms feel acceptable. But once latency approaches half a second, interactions start to feel slow — sometimes unacceptable.

Side note: if it’s hard to imagine what 100 ms feels like, try the Lag simulator 2018 by zorndyuke. Even 50 ms can feel surprisingly uncomfortable in certain situations.

Fixing a Problem Before It Existed

I presented my findings to the cloud migration team, and people were surprised. What initially appeared to be a negligible addition was actually threatening to slow warehouse operations and negatively impact our colleagues’ daily work, and potentially even their compensation, which partly depended on performance.

Fortunately, after several discussions, our Head of Infrastructure managed to remove the supposedly mandatory firewall requirement for internal service calls.

What 10 Milliseconds Taught Me

One important lesson from this experience was to trust your intuition when something feels wrong, even if you cannot immediately explain why.

Spending a few extra hours validating a concern can be far cheaper than ignoring it and paying the consequences later.

The second takeaway is that small, seemingly insignificant costs can become substantial when multiplied — or amplified — by system design decisions.

This experience reinforced my conviction about the importance of strong observability practices. Since then, I have spent increasing amounts of time exploring these tools to detect slowdowns, duplicated calls, and optimization opportunities.

With the rise of AI-assisted analysis, identifying such issues in system design and code is becoming even easier — making observability an area where investing effort truly pays off for building stable and efficient systems.

10 Milliseconds Too Many was originally published on Medium.